HIPAA-Compliant Chatbot

SmartBot360™ chats are HIPAA-compliant: they properly handle sensitive health & medical data, including protected health information (PHI).

SmartBot360 is a healthcare-focused chatbot. We have worked for years with hospitals, networks, small offices, and more to build the most frictionless HIPAA-compliant chat & chatbot on the market.

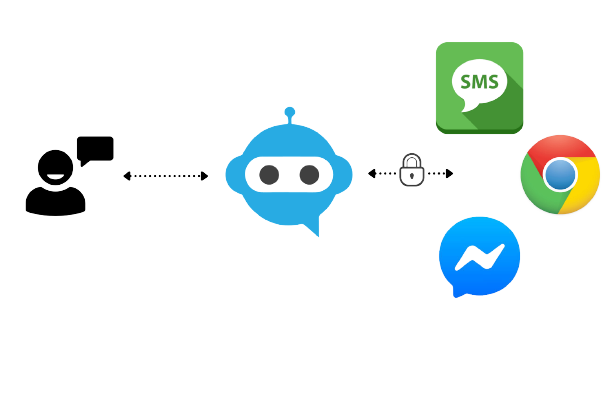

We have developed ways to overcome the vulnerabilities of the most common media, such as SMS and Facebook Messenger. When a patient needs to provide protected health information, SmartBot360 can automatically detect it and send a link to a HIPAA-compliant chat through the patient’s native SMS or chat platform.

Our web-based & SMS chatbots are natively HIPAA-compliant and can also be used for live chat. No extra steps are needed to secure them.

To be HIPAA-compliant, SmartBot360 follows all HIPAA requirements, including full encryption, availability, logging, strong passwords, employee training, and emergency policies. We also support 2-factor authentication (2FA) for added security and privacy.

HIPAA-Compliant Chat for Website and SMS

The main problem many healthcare providers face when evaluating a chatbot is whether or not it is HIPAA-compliant. When using a non-healthcare chatbot, additional steps to secure the chatbot and chats are usually required.

The second most common question we get is whether patients will use a chatbot and how they will benefit from it. Some healthcare businesses received up to 20,000 COVID-related questions a day, and the chatbot saved their support staff hours of work, while some smaller businesses use it for appointment scheduling & pre-visit planning to save time for employees and patients.

Patients generally like getting support as fast as possible. Chatbots offer instant responses in a text interface and can potentially support patients without making them wait on the phone. SMS is the medium with the highest use & open rate, as there is no need to download any app. Facebook Messenger and other texting platforms are also popular among users but are not HIPAA-compliant.

Most chatbots were not built with HIPAA compliance in mind and usually require additional effort to ensure compliance. SmartBot360 was built with HIPAA compliance from day one, storing all communications in secure cloud databases separated by organization.

Working with SmartBot360 offers access to healthcare chatbot experts.

Request a demo to learn how your organization can benefit from a chatbot. See how our chatbot can be customized to be as complex as necessary to improve workflow and patient experience.

We have integrations with commonly used healthcare apps and can help with integrating any other apps your business uses. Contact us if anything is missing and we can work with you to add it.

SmartBot360 HIPAA-Compliant Chatbots & Live Chat

Proprietary state-of-the-art technology for HIPAA-compliant chats

Support 2-factor authentication (2FA)

HIPAA-compliant chat & live chat: exchange sensitive information directly between the patient & the provider

Bypass common vulnerabilities of Facebook Messenger, SMS, and other chat media

Adhere to industry-standard security & privacy policies

Dedicated AWS instances for HIPAA-compliant chat & chatbots

Frequently Asked Questions

+ Are chatbots HIPAA-Compliant?

Typically, chatbots are NOT HIPAA-Compliant unless specified otherwise. A HIPAA-Compliant chatbot requires extra work to secure protected health information (PHI) and related data. In addition to securing PHI, requirements such as encryption in transit and at rest, strong passwords, employee training, secure audit logs, and more need to be addressed.

SmartBot360 addresses all the mandatory requirements and protects against common vulnerabilities that non-HIPAA-Compliant chats do not.

Companies will have their own dedicated AWS instances, and all chats follow encryption rules, do not store chat logs on a user’s device, and have secure audit logs. Whether you want to use a chatbot for live chat or have it completely automated, no extra work is required to secure the chatbots.

+ Things to note for HIPAA-Compliant Chats?

Some things to note to prevent HIPAA violations:

• Understand PHI and what needs to be protected

• Note the use of non-HIPAA-Compliant middlemen (like Facebook, SMS, and more) that patients can chat or live chat with

• Avoid incorrect storage of patient chat logs

• Prevent unauthorized usage by individuals who may not completely understand HIPAA compliance

• Sign a Business Associate Agreement with the company that will handle your PHI

These are just the common issues we notice with non-healthcare chatbots, but keep them in mind to keep chats HIPAA-Compliant.

+ Is live chat HIPAA-Compliant?

Live chats generally follow the same rules that an automated chatbot must follow to be HIPAA-Compliant. As long as the chats are encrypted, stored correctly, and protected against other common vulnerabilities, they can be used for collecting PHI and other sensitive data.

Because a live agent can take over the chatbot at any time, all SmartBot360 live agent chats are HIPAA-Compliant.

+ Is SMS HIPAA-Compliant?

In short, SMS is not HIPAA-Compliant, but it can be used if a patient consents to receiving/sending information over SMS.

When a patient needs to provide PHI but wants to keep sending their information through SMS, SmartBot360 can automatically ask whether they consent to receiving/sending PHI through SMS. If they do not consent, the SMS-based chatbot automatically sends a link to a web chatbot where they can provide their information securely. Reminders, post-procedure follow-ups, and non-sensitive chats can continue through SMS with SmartBot360 without prompting for SMS consent.
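The consent flow described above can be sketched as a small decision function. This is only an illustration under stated assumptions, not SmartBot360's actual implementation: the names `SmsAction` and `next_sms_action` are hypothetical, and the sketch assumes the chatbot already knows whether the next step involves PHI.

```python
from enum import Enum
from typing import Optional


class SmsAction(Enum):
    """Hypothetical next steps for an SMS conversation."""
    CONTINUE_ON_SMS = "continue_on_sms"
    ASK_FOR_CONSENT = "ask_for_consent"
    SEND_SECURE_LINK = "send_secure_link"


def next_sms_action(needs_phi: bool, consent: Optional[bool]) -> SmsAction:
    """Decide what the SMS chatbot does next.

    needs_phi: True when the next step would exchange PHI.
    consent:   None if the patient has not been asked yet,
               True if they consented to PHI over SMS,
               False if they declined.
    """
    if not needs_phi:
        # Reminders, follow-ups, and non-sensitive chats need no consent prompt.
        return SmsAction.CONTINUE_ON_SMS
    if consent is None:
        # PHI is needed and the patient has not been asked yet.
        return SmsAction.ASK_FOR_CONSENT
    if consent:
        return SmsAction.CONTINUE_ON_SMS
    # Patient declined: move the PHI exchange to the secure web chatbot.
    return SmsAction.SEND_SECURE_LINK
```

For example, `next_sms_action(True, False)` returns `SmsAction.SEND_SECURE_LINK`, which corresponds to the case where the patient declines and is sent a link to the web chatbot.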

Patients can reply through SMS to continue the conversation with the chatbot and can freely type because the chatbot AI also analyzes SMS to understand and respond accordingly.

+ SmartBot360 AI vs other chatbots?

The data sources an AI engine learns from are an important factor in whether or not the AI can pull the correct information. Most chatbots use a single data source of keywords, with set responses triggered by those keywords, but this does not work well when patients do not use the provided keywords.

SmartBot360’s AI uses data from four sources to build a more comprehensive AI that does not get confused. Aside from setting up the flow diagram, SmartBot360 users can also upload an FAQ sheet that contains keywords and answers, previous chat logs, and pages on their website. AI is important in healthcare chatbots because whenever a patient has an emergency or asks something similar to an existing question, the chatbot can answer or direct them to the appropriate page with the next steps to take.
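To illustrate why a single keyword table falls short, here is a toy comparison between keyword lookup and a lookup across several sources. It is a sketch under stated assumptions, not SmartBot360's AI: the word-overlap score is a simple stand-in for a real language model, all function names and sample data are hypothetical, and the flow diagram source is left out for brevity.

```python
import re


def tokens(text):
    """Lowercase word set used for a crude similarity measure."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def keyword_answer(question, keyword_table):
    """Single-source bot: answers only if an exact keyword appears."""
    words = tokens(question)
    for keyword, answer in keyword_table.items():
        if keyword in words:
            return answer
    return None


def multi_source_answer(question, sources, min_overlap=2):
    """Search every source for the stored entry sharing the most words."""
    words = tokens(question)
    best_answer, best_score = None, 0
    for entries in sources.values():
        for text, answer in entries:
            score = len(words & tokens(text))
            if score > best_score:
                best_answer, best_score = answer, score
    return best_answer if best_score >= min_overlap else None


# Hypothetical sample data for illustration only.
keyword_table = {"reschedule": "Use the scheduling page to reschedule."}
sources = {
    "faq": [("How do I reschedule my appointment?",
             "Use the scheduling page to reschedule.")],
    "chat_logs": [("Can I move my appointment to another day?",
                   "Yes, pick a new slot on the scheduling page.")],
    "website": [("Clinic opening hours and location",
                 "We are open 8am to 5pm, Monday to Friday.")],
}

question = "I need to move my appointment to Friday"
print(keyword_answer(question, keyword_table))  # None: no keyword matched
print(multi_source_answer(question, sources))   # answer found via chat logs
```

The patient never types the keyword "reschedule", so the keyword-only lookup finds nothing, while the multi-source lookup matches a similar question from the previous chat logs.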

The SmartBot360™ Secure Architecture

Chatbots hosted on websites are natively HIPAA-compliant through SmartBot360’s proprietary secure technology

If a chat starts on a non-HIPAA-compliant medium like Facebook Messenger, WhatsApp, or SMS and protected health information (PHI) must be exchanged, a secure link is automatically sent to seamlessly switch to a HIPAA-compliant chat

HIPAA-compliant live chats whenever an employee needs to take over a chat

No registration or accounts are necessary to use the HIPAA-compliant chatbot. Communication is secure & frictionless
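One way a secure link could move a patient into a HIPAA-compliant chat without an account is an unguessable, single-use link that expires. The sketch below is a minimal illustration under assumptions, not SmartBot360's proprietary technology: the URL, the 15-minute lifetime, the single-use rule, and the `SecureLinkStore` class are all hypothetical, and a real deployment would keep pending links in a secured server-side store rather than in memory.

```python
import secrets
import time

SECURE_CHAT_BASE_URL = "https://chat.example.com/s/"  # hypothetical URL
LINK_TTL_SECONDS = 15 * 60                            # assumed link lifetime


class SecureLinkStore:
    """In-memory store of single-use, expiring chat links (illustration only)."""

    def __init__(self):
        self._pending = {}  # token -> (conversation_id, expires_at)

    def issue(self, conversation_id, now=None):
        """Create a link that resumes the given conversation on the web chat."""
        now = time.time() if now is None else now
        token = secrets.token_urlsafe(32)  # unguessable random token
        self._pending[token] = (conversation_id, now + LINK_TTL_SECONDS)
        return SECURE_CHAT_BASE_URL + token

    def redeem(self, url, now=None):
        """Return the conversation id once, or None if unknown, used, or expired."""
        now = time.time() if now is None else now
        if not url.startswith(SECURE_CHAT_BASE_URL):
            return None
        token = url[len(SECURE_CHAT_BASE_URL):]
        entry = self._pending.pop(token, None)  # pop makes the link single-use
        if entry is None:
            return None
        conversation_id, expires_at = entry
        return conversation_id if now <= expires_at else None
```

The patient only has to tap the link: the random token identifies the conversation, so no login is involved, and a link that has been used or has expired no longer opens the chat.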

Which media are HIPAA-Compliant?

Chatbot companies allow deploying chatbots on chat platforms, such as Facebook Messenger, WhatsApp, or SMS. But are these chatbots HIPAA-compliant? Or can they be easily made to be HIPAA-compliant?

The answer is NO, for several reasons.

A key reason for most of these media, including SMS, Messenger, and WhatsApp, is that there is a third party in the middle. For example, employees at Facebook may be able to read your Messenger messages, or the messages may be stored there in an unencrypted format. SMS messages are transmitted in an unencrypted format and can also be accessed relatively easily (they are not password-protected) by anyone who has access to the mobile phone.

This basically leaves web bots (or chatbots hosted in dedicated mobile apps) as the only ones that may potentially be HIPAA-compliant.

For web bots to be HIPAA-compliant, the chatbot platform must follow all HIPAA requirements, like encryption in transit and at rest, strong passwords, training for employees, and so on. SmartBot360 maintains HIPAA compliance when switching to a live chat & back.

Common Vulnerabilities Addressed By SmartBot360

Man-in-the-middle

Chatlog stored on the user’s device

Encryption of messages in transit

Encryption of data at rest

Use of external NLP services

Secure audit logs

Sensitive information exchanged between patients and providers with SmartBot360 is all done through our secure, HIPAA-compliant servers with no middleman standing in the way. This means that the most common vulnerabilities of other chat services found on social media (Facebook Messenger, SMS, WhatsApp) are not present in SmartBot360’s technological infrastructure. By supporting full-scale, end-to-end encryption, SmartBot360 strictly adheres to industry-standard security and privacy policies.
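As an illustration of one item in the list above, secure audit logs, the sketch below shows a hash-chained log in which each entry's hash covers the previous entry's hash, so later tampering can be detected. This is an assumption about what "secure" could mean here, not a description of SmartBot360's logging: the function names and entry fields are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash before the first entry


def _digest(body):
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log, actor, action):
    """Append an entry whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"actor": actor, "action": action, "prev_hash": prev_hash}
    log.append({**body, "hash": _digest(body)})


def verify(log):
    """Return True if every entry matches its hash and links to its predecessor."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {key: entry[key] for key in ("actor", "action", "prev_hash")}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True
```

Entries record who did what (for example, which agent viewed which chat) rather than the PHI itself. Editing an earlier entry changes its hash and breaks the chain, so `verify` returns `False`.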

If you’re ready to increase patient conversion rates by up to 20% and scale your customer service capabilities, give SmartBot360 a try today. We offer a 30-Day trial and free chatbot building services with no credit card required!

HIPAA-Compliant Live Chat

HIPAA-Compliant live chat for multiple agents

Seamlessly switch to live chat and back to AI when needed

Notify & route to the right person when live chat is requested

SmartBot360 has all the HIPAA-compliant live chat features needed for effective customer service in healthcare. Our chatbot enhances customer service when customer support is not available, and it is also well suited to situations where HIPAA-compliant live chat is needed. When a chatbot user reaches a certain point in the flow or requests a customer service representative, the chatbot notifies and routes the chat to the right person to handle the live chat request.

Some ways to take advantage of seamless switching between live chat and chatbot are to hand off when a patient finishes pre-appointment questions, when a user submits two consecutive questions that the chatbot cannot answer, or when a patient asks more specific appointment questions.
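The handoff triggers above can be expressed as a simple rule check. This is a hedged sketch, not SmartBot360's routing logic: `ChatState`, its fields, and `should_hand_off` are hypothetical names, and the threshold of two unanswered questions is taken from the example in the text.

```python
from dataclasses import dataclass


@dataclass
class ChatState:
    """Hypothetical per-conversation flags tracked by the chatbot."""
    pre_appointment_done: bool = False            # finished pre-appointment questions
    consecutive_unanswered: int = 0               # questions in a row the bot could not answer
    specific_appointment_question: bool = False   # asked a more specific appointment question
    agent_requested: bool = False                 # asked for a representative


def should_hand_off(state: ChatState, max_unanswered: int = 2) -> bool:
    """Return True when the chat should be routed to a live agent."""
    return (
        state.agent_requested
        or state.pre_appointment_done
        or state.specific_appointment_question
        or state.consecutive_unanswered >= max_unanswered
    )
```

For instance, `should_hand_off(ChatState(consecutive_unanswered=2))` is `True`, while a conversation with a single unanswered question and no other trigger stays with the chatbot.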

Augment your business’s customer service at all hours with an AI-powered chatbot that seamlessly switches to live chat and back, handling queries instantly with or without live customer service representatives.

Free 30-Day Trial | Free Setup (DIY or We do it for you) | No Credit Card Required